A well-informed asset strategy is an essential component of developing a novel therapy to meet critical patient needs. Sometimes we call it New Product Planning (NPP); sometimes we call it early commercialization. In all cases, the cross-functional program/pipeline team must recognize the importance of defining the aspirational future for the asset: an approved therapy on the market, impacting patients’ lives. That strategy will guide the clinical plan, the regulatory strategy, and, most definitely, the commercial plan.

For years, that early asset strategy work was done by science-driven new product planning experts — people who knew their way around epidemiology datasets, peer-reviewed journal articles, clinicaltrials.gov, and Excel. Now, in 2026, AI tools are changing and elevating how asset strategy work can be done.

That said, the biopharma industry has a long tradition of reaching for new technology before the instructions are fully written, and then struggling with adoption. AI is no different. The pressure to quickly “adopt AI” is real, even if nobody is quite sure what that means in practice. The risks are also enormous, from understanding the datasets and knowing which questions to ask to protecting confidential data and judging whether the output is accurate and complete.

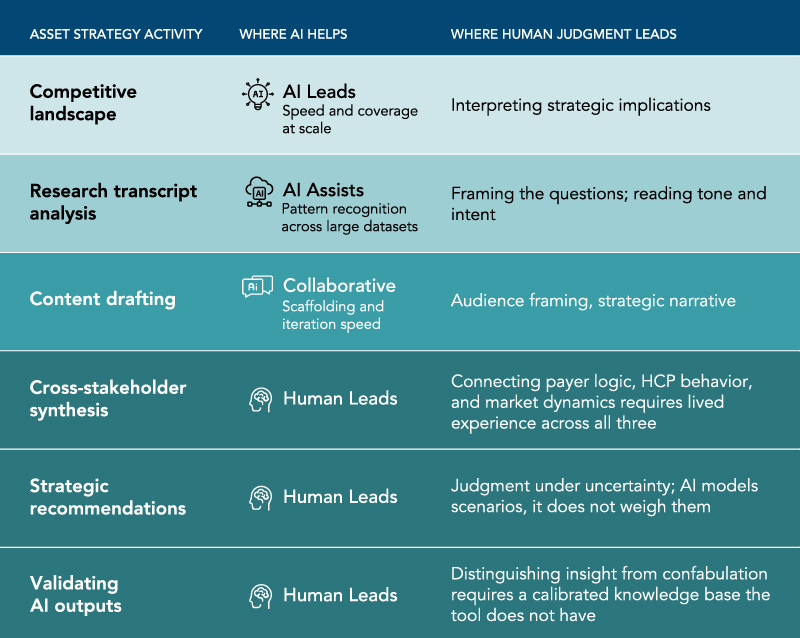

Having now pressure-tested several of these tools, we have a clearer view of where they add genuine horsepower and where a seasoned expert still has to drive. Today’s newsletter shares some of those insights.

[We only conduct our analyses on paid enterprise subscriptions of the latest tools. This ensures confidentiality and data protection and prevents the language models from training on our data. As a company, we intend to evolve our model to ensure our clients benefit from this efficiency while remaining transparent about our use of AI tools.]

Where AI Earns Its Keep

In our competitive landscape work, AI delivered genuine value. Pulling published clinical data, regulatory history, analyst sentiment, and competitor positioning across a therapeutic area in a fraction of the time a research team would require is a meaningful productivity gain. The breadth of coverage was impressive, and it freed us to spend time on the analytical layer, which is where the real value for our clients is derived.

An AI-powered proprietary research tool presented a more instructive experience:

- We loaded the tool with primary market research transcripts from physician and payer interviews, and our initial approach was straightforward: ask for a thematic summary

- The output was what you might expect: organized and coherent, but shallow

- Surface-level pattern recognition across text is a low bar for work that needs to inform commercial strategy

- It’s useful as a starting point for analysis, but it’s nowhere near a final product

What changed the output was changing our approach. Instead of prompting the tool to summarize, we started interviewing it, treating the model as a composite persona representing all the voices in the dataset. We then asked the kind of follow-up questions we’d ask in a live advisory board, such as: “When a patient comes in having failed prior therapy, walk me through how you’re thinking about the next step.”

That framing unlocked something much richer, and the tool became a thinking partner rather than a summarizer.

What the Transcript Can’t Tell You

There is a subtle limitation worth noting: primary market research is a human conversation. A fair amount of what gets communicated in a live interview or recorded session never makes it into the transcript. For example, physicians are a sardonic (SAT word meaning cynical) group. A comment delivered with a raised eyebrow and a half-smile reads entirely differently on video vs. on paper. AI will take those words at face value every time.

Recognizing when a respondent is being wry about payer controls, or signaling professional skepticism through irony, requires having been in the room (or close enough to it), knowing the disease state well enough to understand what’s being implied, and understanding the interpersonal dynamics of how specialists communicate with one another.

When that context is absent, sarcasm becomes data. The AI has no way to flag it, and an inexperienced analyst reviewing the output has no reason to question it. That’s where “a finding” quietly becomes a misdirection that can have huge implications for the asset strategy and the company.

The Prompt Reflects the Prompter

Across it all, one pattern held consistently: the output quality tracked directly to the sophistication of the input. Writing a prompt that yields strategically useful analysis requires knowing the purpose, audience, commercial context, and the right questions to ask. That’s not a technical skill; it’s an experience skill. When we were working through payer dynamics and physician decision-making, the connections that inform product positioning and access strategy didn’t surface until we knew to ask for them. The tool can’t generate those questions on its own or even know that defining those subsequent strategies will be the next step.

There’s also the question of calibration. AI output always sounds confident, whether providing a sharp insight or a straight-up confabulation. Knowing the difference requires an experience-based understanding of how the market actually behaves, built from time spent across drug development, regulatory, commercial, and the clinic. Without that broader business context, it’s hard to know when you’ve arrived at something useful. In today’s changing market, that is even more critical, as dated historical patterns will be less informative.

What We’ve Learned

None of this is intended as a knock on the technology and its transformative properties. It’s simply an honest description of how AI adoption has worked in practice for us so far.

Clearly, AI accelerates the early work, repetitive processes, and initial analyses. It raises the ceiling on what a knowledgeable analyst can produce.

But it doesn’t replace the judgment needed to provide our clients with truly actionable guidance. Further, using it well requires both context and experience within our industry. It’s not an autonomous tool. At least not yet.

A few important takeaways:

- Understand where and how AI is being utilized in your teams and how different tools add value, including how to use them in combination

- Ensure that AI-experienced individuals are creating the prompts and people with functional expertise are building the strategy

- Confirm ALL sources

- Be transparent, citing the use of AI where appropriate

- Continue to share what you learn within the team and with your trusted outside partners


Jim has broad industry and consulting experience. His background includes product development strategy, new product and lifecycle planning, global product launches, and market analysis. His considerable knowledge of oncology, orphan diseases, and specialty markets, plus his ability to facilitate engaging workshops, has made Jim a valued asset for our clients.
